It’s barely two days since President Joe Biden issued an executive order calling for a wide-ranging regulatory framework to ensure that the booming use of artificial intelligence (AI) is conducted safely.

The Biden order instructs multiple government agencies to set rules and standards for developers covering safety, privacy, and fraud. It also invokes the Defense Production Act to require AI developers to share safety and testing data on the models they train with the government, to guard against threats to national and economic security.

The administration also plans to develop guidelines for watermarking AI-generated content and set standards to protect against chemical, biological, radiological, nuclear, and cybersecurity risks.

With top analysts such as Eurasia Group founder and author Ian Bremmer warning that disruptive technology poses a greater threat to humanity than climate change, one might assume that Biden’s response to the AI boom would be widely seen as rational, responsible, and commendable.


Indeed, many tech titans have voiced concern this year about potential risks of AI systems to society and humanity.

Biden’s order came on the same day that G7 nations agreed to an 11-point “code of conduct” for AI companies, developed under a G7 initiative called the “Hiroshima AI Process.”

But not everyone has welcomed the White House’s latest endeavour.

‘Threat of AI is exaggerated’

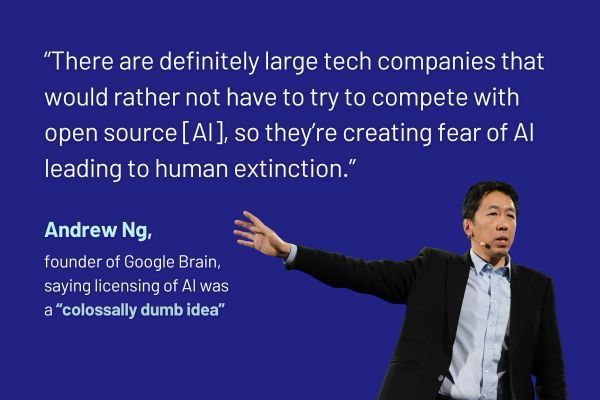

Andrew Ng, one of the founders of Google Brain and a Stanford University professor who taught machine learning to OpenAI co-founder Sam Altman, says large tech companies are exaggerating the risks of AI.

The claim that artificial intelligence could lead to the extinction of humanity was a “bad idea” pushed by Big Tech companies because they wanted to trigger heavy regulation that would reduce competition in the AI sector, Ng said in an interview with the Australian Financial Review.

Professor Ng said that AI had caused harm, such as self-driving cars killing people and a stock market crash in 2010, and he agreed that the technology should be subject to “thoughtful regulation”.

He said technology companies needed to be transparent to avoid a repeat of the negative impacts that stemmed from social media more than a decade ago.

But he said that imposing burdensome licensing requirements on the AI industry was a “colossally dumb idea” that would crush innovation.

“There are definitely large tech companies that would rather not have to try to compete with open source [AI], so they’re creating fear of AI leading to human extinction.”

Ng might be described as an AI optimist. However, many people appear to be concerned about the speed of these dramatic technological changes.

AI deepfakes already circulating

Eurasia Group analyst Nick Reiners noted that what had been a voluntary code of conduct for AI developers has now become a legal obligation, enforced by Washington “at an impressive speed by government standards.”

A GZERO AI blog noted on Wednesday that AI-generated media is already circulating. Some of it is innocuous, such as the image of Pope Francis in a white puffer coat that went viral earlier this year.

“But it could also be dangerous — experts have warned for years that deepfakes and other synthetic media could cause mass chaos or disrupt elections if wielded maliciously and believed by enough people. It could, in other words, supercharge an already-pervasive disinformation problem.”

AI had also crept into domestic political campaigning in the US, it said.

“In April, the Republican National Committee ran an AI-generated ad depicting a dystopian second presidential term for Joe Biden. (And) in July, Florida governor and presidential hopeful Ron DeSantis used an artificially generated Donald Trump voice in an attack ad against his opponent.

“Google recently mandated that political ads provide written disclosure if AI is used, and a group of US senators would like to sign a similar mandate into law… We already have enough people doubting free and fair elections without the influence of AI.”

- Jim Pollard

NOTE: Graphic added on November 1, 2023.
