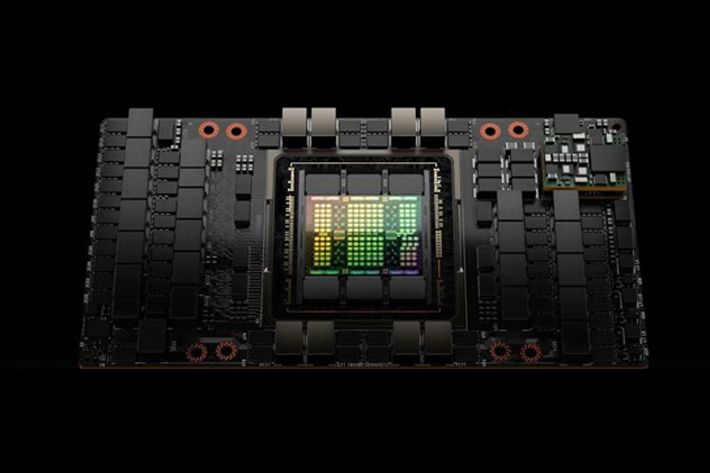

US semiconductor designer Nvidia said on Tuesday it has modified its flagship H100 chip into a version that is legal to export to China.

The new chip, called the H800, is being used by the cloud computing units of Chinese technology firms such as Alibaba Group, Baidu and Tencent, an Nvidia spokesperson said.

“For the young startups, many of them who are building large language models now, and many of them jumping onto the generative AI revolution, they can look forward to Alibaba, Tencent and Baidu to have excellent cloud capabilities with Nvidia’s AI,” Nvidia Chief Executive Jensen Huang told reporters on Wednesday morning, Asia time.


Nvidia’s move follows sweeping export controls implemented by the United States last year that stopped the company from selling its two most advanced chips, the A100 and newer H100, to Chinese customers.

Such chips are crucial to developing generative artificial intelligence (AI) technologies like OpenAI’s ChatGPT and similar products. Nvidia dominates the market for AI chips.

In November last year, Nvidia similarly designed a chip called the A800, a version of the A100 with some capabilities reduced to make it legal for export to China.

A chip industry source in China told Reuters the H800 mainly reduced the chip-to-chip data transfer rate to about half the rate of the flagship H100.

The Nvidia spokesperson declined to say how the China-focused H800 differs from the H100, except that “our 800 series products are fully compliant with export control regulations.”

Last year, US regulators imposed rules to slow China’s development in key technology sectors such as semiconductors and artificial intelligence, citing national security concerns.

The move aimed to hobble China’s chip industry and slow its efforts to modernise its military.

The rules around AI chips imposed a test that bans those with both powerful computing capabilities and high chip-to-chip data transfer rates. Transfer speed is important when training artificial intelligence models on huge amounts of data because slower transfer rates mean more training time.

- Reuters, with additional editing by Vishakha Saxena
